MuseCoco: Generating Symbolic Music from Text

Abstract

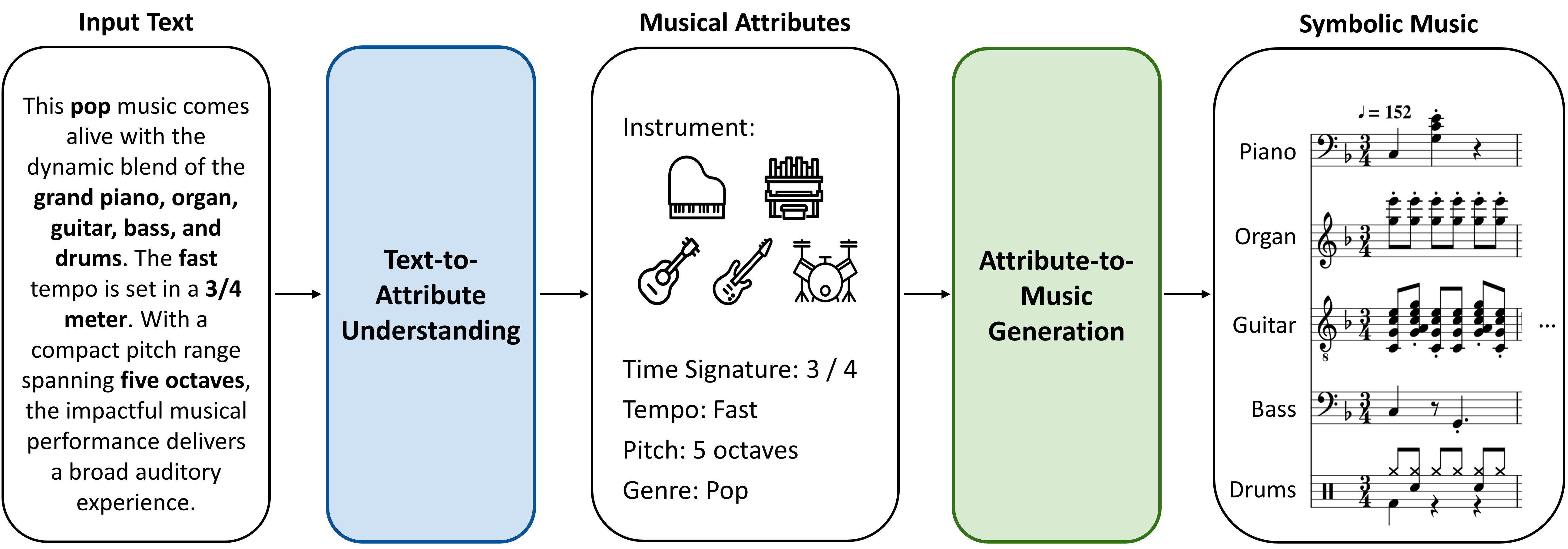

Because text is an inherently easy way for users to express intent, generating music from text is a natural goal. In this paper, we introduce MuseCoco (Music Composition Copilot), a system designed to compose symbolic music from text descriptions. It uses musical attributes as a bridge and divides the process into a text-to-attribute understanding stage and an attribute-to-music generation stage, which brings three key advantages. First, it eliminates the need for paired text-to-music data: text-attribute pairs for the text-to-attribute understanding stage can be synthesized automatically in any quantity needed, and the attributes required for the attribute-to-music generation stage can be extracted directly from music data, which alleviates the labor-intensive process of human annotation. Second, thanks to the explicit attribute design, the system offers precise control over musical attributes, so the musical output can be shaped accurately according to the user's intentions. Third, it offers an additional attribute-conditioned control option beyond textual input, which enhances its versatility and usability. Our experimental results demonstrate that MuseCoco significantly outperforms our best-performing baseline, GPT-4, on musicality, controllability, and overall score, by 45.5%, 35.2%, and 47.0%, respectively. Objective control accuracy also improves by approximately 20%. Additionally, we have developed a large-scale model with 1.2 billion parameters that shows exceptional controllability and musicality. In practice, MuseCoco can serve as a user-friendly tool for musicians, who can generate music simply by providing text descriptions, which is substantially more efficient than composing manually from scratch.

Figure 1: The two-stage framework of MuseCoco. Text-to-attribute understanding extracts diverse musical attributes, based on which symbolic music is generated through the attribute-to-music generation stage.
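As a rough illustration of how text-attribute pairs might be synthesized automatically, the sketch below fills sentence templates with attribute values. The template wording, the attribute names, and the function `synthesize_pair` are assumptions made for this example, not MuseCoco's actual templates or code.

```python
import random

# Hypothetical templates, keyed by attribute name (illustrative only).
TEMPLATES = {
    "time_signature": [
        "{value} is the time signature of the music.",
        "The piece is written in {value} time.",
    ],
    "tempo": [
        "This music is {value}-tempo.",
        "The song keeps a {value} tempo throughout.",
    ],
    "instrument": [
        "The use of {value} is vital to the music.",
        "You can hear {value} in this song.",
    ],
}


def synthesize_pair(attributes, rng):
    """Return (text, labels) for one synthetic training example."""
    names = list(attributes)
    rng.shuffle(names)  # vary sentence order between examples
    sentences = [
        rng.choice(TEMPLATES[name]).format(value=attributes[name])
        for name in names
    ]
    # The labels are simply the attribute values that produced the text.
    return " ".join(sentences), dict(attributes)


text, labels = synthesize_pair(
    {"time_signature": "3/4", "tempo": "low", "instrument": "piano"},
    random.Random(0),
)
print(text)
print(labels)
```

Because the text is generated from the attribute values, every synthetic description comes with its labels for free, which is why no human annotation is needed for this stage.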

Please note: this work focuses on generating symbolic music, which does not contain timbre information. We use MuseScore (https://musescore.org/en) to export mp3 files for reference; musicians are encouraged to use the .mid files for further timbre improvement.

Comparison with Baselines

Group 1

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated sample from BART demonstrates inadequate control over attributes such as time signature, duration, and octaves. Furthermore, the sample's musicality suffers due to a lack of harmonies, resulting in an incomplete composition.

GPT-4: The generated music from GPT-4 is controlled well. However, it tends to sound monotonous because the rhythm patterns lack variation, which is common in most music generated by GPT-4. Furthermore, some counterpoints in the composition, specifically in the 4th and 10th bars, are poorly integrated, detracting from the overall quality of the piece.

MuseCoco: The generated music from MuseCoco is controlled precisely. It also has more complex harmonies and more diverse rhythm patterns, which make for a better listening experience.

Group 2

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated music suffers from inaccuracies in its control of time signature, duration, octaves, and note density. Furthermore, the melody line lacks variation, resulting in a monotonous composition with repetitive rhythm patterns and harmonic progressions.

GPT-4: The generated music lacks precision in controlling duration, octaves, and note density. As a result, the composition suffers from a monotonous melody characterized by simplistic rhythms and a lack of harmonies.

MuseCoco: The generated music demonstrates precise control, particularly notable in the dense arrangement of notes. This intricate composition style contributes to its memorability and adds an interesting and captivating quality to the music.

Group 3

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated music deviates from the attributes specified in the text description, such as time signature, duration, and octaves. Furthermore, the style of the music does not resemble that of Chopin. Additionally, the abnormal usage of rests within each voice part raises doubts about the composition's coherence and musicality.

GPT-4: The generated music falls short in multiple aspects. It does not span 4 octaves as specified, and it fails to capture the essence of Chopin's style. The melody lacks variation, resulting from limited rhythm patterns and harmonies. Additionally, the inclusion of two identical tracks introduces redundancy and detracts from the overall composition.

MuseCoco: The generated music demonstrates precise control. Specifically, the 5th and 6th bars skillfully capture the distinct essence and emotive qualities associated with Chopin's style, effectively conveying the desired musical expression.

Group 4

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated music is monophonic, uses only the piano, and lacks the bass, voice, flute, and drum. It also has the wrong time signature, 6/8.

GPT-4: The generated music has an incorrect pitch range (1 octave) and tempo (very slow). Its melody is monotonous and repetitive.

MuseCoco: The generated music from MuseCoco is controlled precisely. The moderato chanting presents a peaceful religious style.

Group 5

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated music lasts too long with a monophonic melody and does not resemble the style of Brahms.

GPT-4: The tempo of the music generated by GPT-4 is too slow and the pitch range is less than one octave, neither of which matches the text. The melody is also too simple to evoke Brahms.

MuseCoco: The generated music demonstrates precise control. Specifically, it is in 4/4 time, in a minor key, and at a fast tempo. The development of the multi-layered piano part reflects Brahms' style.

Group 6

| BART | GPT-4 | MuseCoco |
|---|---|---|

Comments:

BART-base: The generated music is too long to match the text. It consists of disconnected notes and a dull melody, which cannot express the classical style of Schubert.

GPT-4: The pitch range of the generated music is limited to only one octave. Its simple melody lacks Schubert's classical style.

MuseCoco: The generated music is controlled perfectly. It uses the piano at a moderate tempo in a major key, presenting a relaxed 3/4-meter style like a Schubert minuet.

Generation Diversity

We test the generation diversity with the same text conditions.

Sample 1

Text Description:

Music is representative of the typical pop sound and spans 13 ~ 16 bars, this is a song that has a bright feeling from the beginning to the end. This song has a very fast and lively rhythm. The use of a specific pitch range of 3 octaves creates a cohesive and unified sound throughout the musical piece.

Generated Samples:

Explanations:

These generated samples exhibit the characteristics of typical popular music. Each sample lasts approximately 16 bars, and their tempos differ: 114 BPM, 102 BPM, and 108 BPM, respectively. The melodies span approximately 4 octaves, contributing to the overall range of the piece. Throughout each composition, a major chord progression is employed, creating a consistent and uplifting atmosphere from start to finish. This tonal choice effectively captures the essence of a beautiful, bright day accompanied by a clear blue sky, evoking a sense of positivity and joy.
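Attribute checks such as the pitch range mentioned above can be approximated directly from note data. The sketch below is a minimal example, assuming pitches are given as MIDI note numbers and that a range of N octaves means the span between the lowest and highest note, rounded up to whole octaves. That rounding convention and the helper names are assumptions for illustration, not MuseCoco's definitions.

```python
import math


def pitch_range_octaves(midi_pitches):
    """Octaves spanned by the lowest and highest pitch (assumed convention)."""
    if not midi_pitches:
        raise ValueError("at least one pitch is required")
    span_semitones = max(midi_pitches) - min(midi_pitches)
    return math.ceil(span_semitones / 12)  # 12 semitones per octave


def matches_range(midi_pitches, target_octaves, tolerance=0):
    """True if the measured range is within `tolerance` octaves of the target."""
    return abs(pitch_range_octaves(midi_pitches) - target_octaves) <= tolerance


# A melody running from C3 (48) to C6 (84) spans exactly three octaves.
melody = [48, 55, 60, 72, 84]
print(pitch_range_octaves(melody))
print(matches_range(melody, target_octaves=3))
```

A tolerance parameter is included because a description such as "3 octaves" may reasonably be judged against a slightly wider or narrower generated range.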

Sample 2

Text Description:

3/4 is the time signature of the music. This song is unmistakably classical in style. This music is low-tempo. The use of piano is vital to the music.

Generated Samples:

Explanations:

These generated samples follow a 3/4 meter with a slow tempo of around 60-70 BPM. The use of piano, the harmonic progressions, and the counterpoint give them a classical style.

Sample 3

Text Description:

The flute creates a lively and upbeat atmosphere in this fast-paced song with just the right tempo. The time signature of this song is not usual. The music is enriched by oboe, trumpet, flute and tuba.

Generated Samples:

Explanations:

In the generated samples, oboe, trumpet, flute, and tuba are utilized, with the flute playing a prominent role. The music features an uplifting melody, harmonies derived from major chords and their extensions, and a lively rhythm. Notably, the music deviates from the common-practice tradition by employing the unusual 2/2 time signature.

Sample 4

Text Description:

The song's upbeat melody and swift pace, paired with its 3 octave pitch range and 3/4 time signature, offers a diverse and dynamic listening experience. Strings, drum, clarinet and flute are utilized in the musical performance. The song's length is around about 14 bars.

Generated Samples:

Explanations:

In addition to the suggested instrumentation, the generated samples feature additional instruments such as the harp, enhancing the expressiveness of the music. These samples adhere to the given specifications, spanning 4 octaves in range, utilizing a 3/4 time signature, maintaining a fast tempo, and consisting of 14 bars. These musical choices contribute to the overall character and energy of the compositions, creating an engaging and dynamic listening experience.

Sample 5

Text Description:

This song has a steady and moderate rhythm. The music is given its sound through saxophone, drum and trombone. You can hear about 14 bars in this song. 2/4 is the time signature of the music.

Generated Samples:

Explanations:

Controlled by Specific Attributes

The model can also take specific attribute values, rather than text descriptions, as the conditions for generating the corresponding music.

| Attribute Value | Generated Samples |
|---|---|
| Rhythm Intensity: moderate; Bar: 16; Time Signature: 4/4; Tempo: moderato; Time: 30-45s | |
| Instrument: violin; Rhythm Intensity: intense; Tempo: slow; Time: >60s; Artist Style: Bach | |
| Instrument: piano, drum, bass; Tempo: moderato | |
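To make the attribute-conditioned input concrete, the sketch below parses an attribute line like those in the table into a dictionary. The key list is taken from the rows shown here; the function name `parse_attributes` and the dictionary representation are assumptions for illustration and say nothing about how MuseCoco encodes conditions internally.

```python
import re

# Attribute names seen in the table above; longer names come first so that
# "Time Signature" is not mistaken for "Time".
KEYS = [
    "Rhythm Intensity", "Time Signature", "Artist Style",
    "Instrument", "Tempo", "Time", "Bar",
]
KEY_PATTERN = re.compile(r"\b(" + "|".join(KEYS) + r"):\s*")


def parse_attributes(line):
    """Split 'Key: value Key: value ...' into a dict of attribute values."""
    parts = KEY_PATTERN.split(line)
    # parts = ['', key1, value1, key2, value2, ...]
    result = {}
    for key, value in zip(parts[1::2], parts[2::2]):
        value = value.strip(" ;")
        if key == "Instrument":
            # Several instruments may be listed for one piece.
            result[key] = re.split(r"[,\s]+", value)
        else:
            result[key] = value
    return result


print(parse_attributes(
    "Instrument: violin Rhythm Intensity: intense Tempo: slow "
    "Time: >60s Artist Style: Bach"
))
```

Attributes that are not mentioned are simply absent from the result, mirroring the third row of the table, which specifies only instruments and tempo.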

Description Length

This section demonstrates the model's ability to handle descriptions of different lengths.

Short Descriptions

Long Descriptions

Comparison with MusicLM

MusicLM generates musical audio from text descriptions, whereas our work generates symbolic music from similar textual cues. Despite this difference, we find value in presenting comparative results with MusicLM, since they highlight the strengths and limitations of both approaches.

The MusicLM samples were generated using this link: https://aitestkitchen.withgoogle.com/experiments/music-lm

Comparison 1

| MusicLM | MuseCoco |
|---|---|

Comments: The musicality of the MusicLM sample is close to that of a live performance. However, it is in 4/4 time instead of the 3/4 specified in the text, and its genre is closer to jazz than to classical.

Comparison 2

| MusicLM | MuseCoco |
|---|---|

Comments: The sample produced by MusicLM demonstrates commendable musicality, featuring a discernible yet somewhat subtle melody line. Notably, the time signature of the generated sample (4/4) differs from the provided description (3/4). Furthermore, it is noteworthy that the generated samples lack the presence of strings and flutes, elements explicitly outlined in the provided text.

Comparison 3

| MusicLM | MuseCoco |
|---|---|

Comments: Both samples exhibit commendable control, but the MusicLM sample leans more toward a major key tonality (the description specifies minor). Additionally, the MusicLM-generated music has a live-performance quality, characterized by noticeable rubato, whereas the MuseCoco sample adheres to a more structured composition style.

Comparison 4

| MusicLM | MuseCoco |
|---|---|

Comments: Both samples are controlled well and show good musicality.

Conclusion: End-to-end methods face two challenges: 1) They need large amounts of paired data, which is hard and costly to gather. 2) They compress control details into a single vector, which makes precise control difficult. We tested controlling MusicLM (an end-to-end model) with our dataset and found that it struggles with fine details such as tempo and meter. Unlike end-to-end methods, our work emphasizes the composition process. It lets users finely control musical attributes during music creation, in line with the iterative and expressive nature of composition. This collaboration between human creativity and AI assistance enriches the environment for music composition.